How to monitor Go applications with VictoriaMetrics

Monitoring is fun! It is so fun that once you started you’ll never leave any of your apps without some fancy metrics. But sometimes beginners are afraid to touch this area, mostly because the rest of the tech is overwhelmed with complexity, standards, and conventions.

In this article, we’ll try to show how simple it could be to start using metrics, storing them in VictoriaMetrics TSDB and visualizing via Grafana.

Instrumenting app with metrics

We’ll start by implementing a very simple go web app that shows articles about observability. As an endless source of nice and good articles we’ll use Google search!

It is a web server that serves only 2 pages: home page with greeting and /articles page with results from google search.

Run it via command go run . and try to visit pages:

Now let’s imagine you have run such a web service for a couple of days already. Could you answer the following questions:

- How frequent users visit your service?

- Which page is the most popular?

To get the answers we need to introduce application metrics! Let’s instrument our service with some of these via special Go library github.com/VictoriaMetrics/metrics:

go get github.com/VictoriaMetrics/metricsThe questions we need to answer are general for all requests we serve, so it sounds natural to have a single place in code where we can add metrics:

The piece of code above is usually named as a middleware — wrapper for handlers where we can add some additional logic or actions. It could be logging, auth, privileges checking or metrics!

Counter

Counter metric type is used to capture number of events. If we want to know how many times handler was called we need to add a counter metric to the middleware:

Method metrics.GetOrCreateCounter accepts a metric name and returns counter object. You have to be serious about the metric name - keep it descriptive and clear about what it measures. In our case, metric name means the following.

“requests_total” consists of two things:

- requests — the entity we measure. You can be more specific here by saying “requests_success” (for only successful requests) or “request_errors” (for errors only). Metric name is a very important thing and it must be clear to every person who reads it what is actually measured, just like variable names in programming.

- _total — suffix which explicitly says we deal with counter metric type which only grows up and never goes down. And it is super cool because by reading only the metric name you can figure out its nature.

- {path=%q} — curly braces mean we are describing labels. Labels are something like metadata, dimensions, additional information we want to put into the metric. In this case, we put the URL path into the “path” label, so we’ll be able to distinguish the number of events for different URLs. It is allowed to have multiple labels separated with comma:

requests_total{path=%q, status_code="%d", browser=%q}. Try to keep the number of labels relatively small (less than 30) in order to keep the data model simple.

Hints:

- More about naming convention may be found here;

- Naming convention is not strict (except allowed chars) and any metric name would be accepted by VictoriaMetrics or Prometheus. But convention will help to keep names meaningful, descriptive and clear to other people. Following convention is a good practice;

- Every unique combination of metric name and its labels form a new time series. For example,

requests_total{path="/", code="200"}andrequests_total{path="/", code="403"}are two different time series. The more unique time series you have, the more resources VictoriaMetrics or Prometheus will need to process them. The number of all unique labels combination for one metric called “cardinality”.

So method metrics.GetOrCreateCounter will return us a metric of type counter with current URL path as a label and we can call its .Inc() method. It means that the returned object will change its state from 0 to 1 and every subsequent .Inc() call will increment the previous value by one. Both methods GetOrCreateCounter and Inc are thread-safe and may be accessed from multiple goroutines.

Hints:

- If performance matters, consider caching

GetOrCreateCounterorNewCounterresult into variable to avoid unnecessary allocations and lookups; - If you need to increment by some value (for batches processing, for example), use

.Add(n int)method instead.

The final source code state may be found here.

Storing metrics

Now we have metrics in our application but how to see them? The common way is to expose metrics and their values in plain text format via HTTP handler:

After adding this handler restart the application and check http://localhost:8080/metrics page. The page should show a bunch of metrics in the text format like the following:

requests_total{path="/metrics"} 1

go_memstats_alloc_bytes 240288

go_memstats_alloc_bytes_total 240288

go_memstats_buck_hash_sys_bytes 3683

…There are a lot of metrics on the page but only one metric is ours requests_total{path="/metrics"} 1. It is easy to understand its meaning — the page was served exactly one time. Now open a different page like http://localhost:8080/foobar and then get back to /metrics once again. The output will be similar to the following:

requests_total{path="/favicon.ico"} 3

requests_total{path="/foobar"} 1

requests_total{path="/metrics"} 2From the output above we can say that we served multiple pages, actually. We expected to see “/foobar” and “/metrics” in label values, but “/favicon.ico” is something new. Turns out, that browser (Chrome in my case) automatically generates such request to get the favicon. And now with freshly added metrics we know browser does this!

So now we have these metrics page but something is still missing. Could you say looking on it “when we started to serve requests” or “how frequent we serve requests”? The thing which is missing is time — we don’t have the retrospective view on these metrics. But the one thing we can say for sure — metrics we see on the /metrics page are showing current metrics values. It means, there is a notion of time when we see that page and if we open&save this page every minute or so, we’ll see how metrics are changing in time. This is a basic principle of a pull model — TSDBs like Prometheus and VM are checking application metrics page with some interval and store their values with the corresponding timestamp.

Hints

- pull model (when database gathers the application’s data) is opposite to push model (when applications writes data into database). Both have their pros&cons and discussion about this deserves a separate article;

- pull model got popular because of Prometheus and was proved as reliable for metrics collection;

- “pulling” metrics from the application is also called “scraping” ;

- VM supports both metrics collection models, so part of service can “push” metrics into VM while the rest can be “scraped”.

So let’s configure VictoriaMetrics to collect metrics from the application. We’ll need two things for this:

- Docker to run VM image (or run just a binary if it is more convenient for you)

- Scraping configuration file with our application address.

Let’s begin with the configuration file. For the sake of compatibility, VictoriaMetrics supports the same specification as Prometheus, so you can use one configuration file for both systems. Let’s create the config file:

Save configuration to the file and run VictoriaMetrics image:

docker run -it --rm -p 8428:8428 -v /path/to/config.yaml:/etc/config.yaml \

victoriametrics/victoria-metrics -promscrape.config=/etc/config.yamlYou should see something like following:

2020–11–29T12:38:04.182Z info VictoriaMetrics/lib/promscrape/scraper.go:86 reading Prometheus configs from "/etc/config.yaml"

2020–11–29T12:38:04.189Z info VictoriaMetrics/lib/promscrape/scraper.go:320 static_configs: added targets: 1, removed targets: 0; total targets: 1

2020–11–29T12:38:04.941Z info VictoriaMetrics/lib/storage/partition.go:232 partition "2020_11" has been createdHints:

- Also you can visit http://localhost:8428/ to see a greeting;

- Visit http://localhost:8428/targets to get the current list of targets to scrape.

It would mean VictoriaMetrics successfully parsed the config file and started to scrape target with 1s interval. VictoriaMetrics is a database for storing time series data, the actual fun comes when we visualize collected data. And the best tool I am aware of in this area is Grafana! So let’s run Grafana container:

docker run -p 3000:3000 grafana/grafanaVisit http://localhost:3000/ and log into Grafana with the default username admin and password admin. Grafana is an observability platform and supports a lot of different data sources. We’re interested in Prometheus data source which allows to execute PromQL queries against configured URL and this works perfectly for VictoriaMetrics. Let’s create a new datasource:

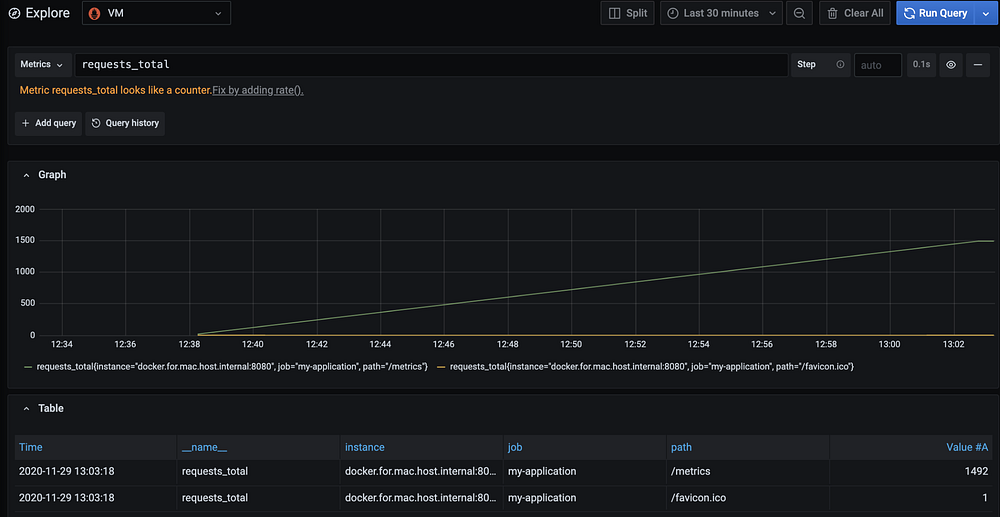

As a URL we need to set VictoriaMetrics address (for MacOS use http://docker.for.mac.host.internal:8428 address) and press Save&Test button. It should become green and then caption “Data source is working” will appear. Now we’re ready to plot some metrics! Go to http://localhost:3000/explore page, choose VictoriaMetrics datasource on the top of the page and type requests_total into the query field:

From the picture above we see that VictoriMetrics started to scrape metrics at 12:38, request returned us two time series:

- requests_total{instance=”docker.for.mac.host.internal:8080", job=”my-application”, path=”/metrics”}

- requests_total{instance=”docker.for.mac.host.internal:8080", job=”my-application”, path=”/favicon.ico”}

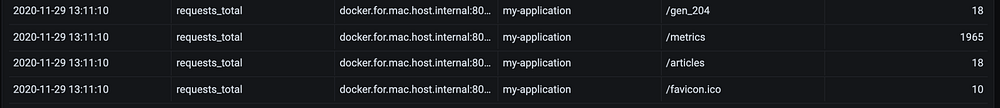

They’re almost identical except the “path” label and we can say that page “/metrics” was served 1492 times, while “/favicon.ico” was served only once. Now let’s open http://localhost:8080/articles page in our application and refresh it multiple times. Then get back to Grafana page and press the “Run Query” button once again. In my case I see two new time series:

- requests_total{instance=”docker.for.mac.host.internal:8080", job=”my-application”, path=”/articles”}

- requests_total{instance=”docker.for.mac.host.internal:8080", job=”my-application”, path=”/gen_204"}

The first one with path="/articles" is expected. But path="/gen_204" is something strange and we didn't open that page. It turns out this request is generated by some javascript on the google page — TIL!

So we can answer now the question “Which page is the most popular?”, but what about “How frequent users visit your service”? To answer the question let’s make run a different query:

In order to understand what happens on the picture above let’s decompose query sum(rate(requests_total)) by(path) :

- The first step is to get all time series with name

requests_total; - Then calculate

rate— basically the “speed” of value changing; - And finally sum results with grouping by

pathlabel.

As a result, we see a couple of time series with only one label path we chose to group by. And here’s what we can say about the graph:

- Page “/path” has rate 1 — means it is requested once every second. And we know why — it is VictoriaMetrics scraping the target.

- All other pages seem to be rarely requested but there was a spike at 13:08–13:09 — moment when we opened multiple times the “/articles” page.

Hints

- We executed our queries in Grafana’s Explore mode which was designed for quick ad-hoc queries. If you want to make graphs permanent — build a dashboard!

- Query language we used here is called MetricsQL. It is very similar to Prometheus query language PromQL but has differences. Learn more about PromQL and MetricsQL here.

- VictoriaMetrics also exposes its own metrics on http://localhost:8428/metrics page. Add it to the scrape targets and explore via official Grafana dashboard!

Summary

In the application we used only one type of metrics supported by the library — counter. There are three more types we can use:

- gauge — for values which could go up and down, such as memory usage, CPU load or temperature;

- summary — complex metric type to track distribution of values. For example, page load duration or body size.

- histogram — is similar to summary type but has a different data model, which allows to aggregate histogram values across multiple service replicas.

All of these types are very handy in special situations, so always think in advance which of these types fits the best.

Want to learn more about VictoriaMetrics? Visit the following links: